
Web3 Has a Memory Problem — And We Finally Have a Fix

Web3 has a memory problem. Not in the “we forgot something” sense, but in the core architectural sense. It doesn’t have a real memory layer.

Blockchains today don’t look completely alien compared to traditional computers, but a foundational piece of legacy computing is still missing: a memory layer built for decentralization that will support the next iteration of the internet.

Muriel Médard is a speaker at Consensus 2025, May 14-16.

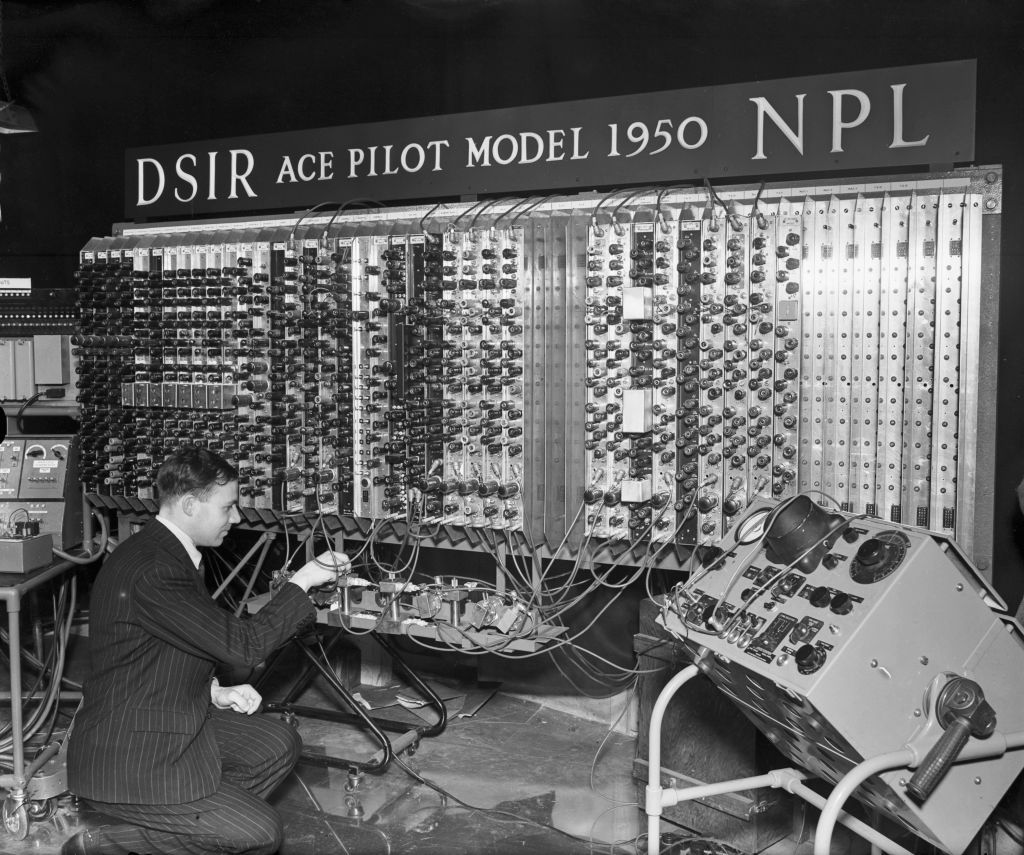

After World War II, John von Neumann laid out the architecture for modern computers. Every computer needs input and output, a CPU for control and arithmetic, and memory to store the latest version of its data, along with a “bus” to retrieve and update that data in memory. That memory is commonly known as RAM, and the architecture has been the foundation of computing for decades.

At its core, Web3 is a decentralized computer — a “world computer.” At the higher layers, it’s fairly recognizable: operating systems (EVM, SVM) running on thousands of decentralized nodes, powering decentralized applications and protocols.

But when you dig deeper, something’s missing. The memory layer, essential for storing, accessing and updating short-term and long-term data, doesn’t look like the memory bus or memory unit von Neumann envisioned.

Instead, it’s a mashup of different best-effort approaches to achieve this purpose, and the results are overall messy, inefficient and hard to navigate.

Here’s the problem: if we’re going to build a world computer that’s fundamentally different from the von Neumann model, there had better be a really good reason to do so. As of right now, Web3’s memory layer isn’t just different, it’s convoluted and inefficient. Transactions are slow. Storage is sluggish and costly. Scaling for mass adoption with the current approach is nigh impossible. And that’s not what decentralization was supposed to be about.

But there is another way.

A lot of people in this space are doing their best to work around this limitation, but we’ve reached the point where the current workarounds simply cannot keep up. This is where algebraic coding comes in: it uses equations to represent data, which brings efficiency, resilience and flexibility.

The core problem is this: how do we implement decentralized code for Web3?

A new memory infrastructure

This is why I took the leap from academia, where I held the role of MIT NEC Chair and Professor of Software Science and Engineering, to dedicate myself and a team of experts to advancing high-performance memory for Web3.

I saw something bigger: the potential to redefine how we think about computing in a decentralized world.

My team at Optimum is creating decentralized memory that works like a dedicated computer. Our approach is powered by Random Linear Network Coding (RLNC), a technology developed in my MIT lab over nearly two decades. It’s a proven data coding method that maximizes throughput and resilience in high-reliability networks from industrial systems to the internet.

Data coding is the process of converting information from one format to another for efficient storage, transmission or processing. Data coding has been around for decades and there are many iterations of it in use in networks today. RLNC is the modern approach to data coding built specifically for decentralized computing. This scheme transforms data into packets for transmission across a network of nodes, ensuring high speed and efficiency.

With multiple engineering awards from top global institutions, more than 80 patents, and numerous real-world deployments, RLNC is no longer just a theory. RLNC has garnered significant recognition, including the 2009 IEEE Communications Society and Information Theory Society Joint Paper Award for the work “A Random Linear Network Coding Approach to Multicast.” RLNC’s impact was also acknowledged with the IEEE Koji Kobayashi Computers and Communications Award in 2022.

RLNC is now ready for decentralized systems, enabling faster data propagation, efficient storage, and real-time access, making it a key solution for Web3’s scalability and efficiency challenges.

Why this matters

Let’s take a step back. Why does all of this matter? Because we need memory for the world computer that’s not just decentralized but also efficient, scalable and reliable.

Currently, blockchains rely on best-effort, ad hoc solutions that only partially achieve what memory does in high-performance computing. What they lack is a unified memory layer that encompasses both the memory bus for data propagation and the RAM for data storage and access.

The bus part of the computer should not become the bottleneck, as it does now. Let me explain.

“Gossip” is the common method for data propagation in blockchain networks. It is a peer-to-peer communication protocol in which nodes exchange information with random peers to spread data across the network. In its current implementation, it struggles at scale.

Imagine you need 10 pieces of information from neighbors who repeat what they’ve heard. As you speak to them, at first you get new information. But as you approach nine out of 10, the chance of hearing something new from a neighbor drops, making the final piece of information the hardest to get. Chances are 90% that the next thing you hear is something you already know.

This is how blockchain gossip works today — efficient early on, but redundant and slow when trying to complete the information sharing. You would have to be extremely lucky to get something new every time.
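The slowdown in the neighbor example can be put into numbers. The sketch below is a deliberately simplified model, not a description of any real gossip protocol: it assumes every message you hear is one of the pieces chosen uniformly at random, independent of what you already hold. The function names are illustrative.

```python
from fractions import Fraction

def chance_of_repeat(total, have):
    """Probability that the next random message is a piece you already hold."""
    return Fraction(have, total)

def expected_messages(total):
    """Expected number of random messages needed to collect every piece."""
    # Holding j pieces, the next message is new with probability (total - j) / total,
    # so the expected wait for the next new piece is total / (total - j).
    return sum(Fraction(total, total - j) for j in range(total))

pieces = 10
print(float(chance_of_repeat(pieces, 9)))   # 0.9
print(float(expected_messages(pieces)))     # about 29.29
```

Under these assumptions, collecting 10 pieces takes about 29 messages on average, and roughly 10 of those are spent waiting for the final piece alone.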

With RLNC, we get around the core scalability issue in current gossip. RLNC works as though you managed to get extremely lucky, so every time you hear info, it just happens to be info that is new to you. That means much greater throughput and much lower latency. This RLNC-powered gossip is our first product, which validators can implement through a simple API call to optimize data propagation for their nodes.
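To make the “extremely lucky” intuition concrete, here is a minimal sketch of random linear coding in general, not of Optimum’s product or API. It assumes a small prime field (arithmetic modulo 257) for readability, where deployed systems typically use other finite fields, and the `encode` and `Decoder` names are hypothetical. Each coded packet is a random linear combination of all source pieces, and the receiver recovers the originals by Gaussian elimination once it holds enough independent combinations.

```python
import random

P = 257  # illustrative prime field; every byte value 0..255 fits below it

def encode(packets, rng):
    """Return (coefficients, payload): one random linear combination of all packets."""
    coeffs = [rng.randrange(P) for _ in packets]
    width = len(packets[0])
    payload = [sum(c * p[i] for c, p in zip(coeffs, packets)) % P for i in range(width)]
    return coeffs, payload

class Decoder:
    def __init__(self, k):
        self.k = k
        self.rows = []  # (pivot column, fully reduced row of coefficients + payload)

    def receive(self, coeffs, payload):
        """Absorb one coded packet; return True if it carried new information."""
        row = list(coeffs) + list(payload)
        # Cancel the columns already covered by earlier packets.
        for pivot, known in self.rows:
            factor = row[pivot]
            if factor:
                row = [(a - factor * b) % P for a, b in zip(row, known)]
        for col in range(self.k):
            if row[col]:
                inv = pow(row[col], -1, P)  # modular inverse (Python 3.8+)
                row = [(a * inv) % P for a in row]
                # Clear the new pivot column from the rows stored so far.
                self.rows = [(pc, [(a - r[col] * b) % P for a, b in zip(r, row)])
                             for pc, r in self.rows]
                self.rows.append((col, row))
                return True
        return False  # linearly dependent on what we already hold

    def done(self):
        return len(self.rows) == self.k

    def decode(self):
        """Once done, the reduced rows hold the original packets in order."""
        return [row[self.k:] for _, row in sorted(self.rows)]

rng = random.Random(7)
source = [list(f"piece-{i:02d}".encode()) for i in range(10)]
decoder = Decoder(k=len(source))
received = 0
while not decoder.done():
    decoder.receive(*encode(source, rng))
    received += 1
print(received, decoder.decode() == source)
```

In this toy setting a coded packet fails to be new only when its random coefficients happen to fall in the span of what the receiver already has, which happens with probability at most 1/257 per packet. That is why the receiver usually needs about as many packets as there are pieces, rather than the roughly threefold overhead of uncoded random gossip.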

Let us now examine the memory part. It helps to think of memory as dynamic storage, like RAM in a computer or, for that matter, our closet. Decentralized RAM should mimic a closet; it should be structured, reliable, and consistent. A piece of data is either there or not, no half-bits, no missing sleeves. That’s atomicity. Items stay in the order they were placed — you might see an older version, but never a wrong one. That’s consistency. And, unless moved, everything stays put; data doesn’t disappear. That’s durability.

Instead of the closet, what do we have? Mempools are not something we keep around in computers, so why do we do that in Web3? The main reason is that there is not a proper memory layer. If we think of data management in blockchains as managing clothes in our closet, a mempool is like having a pile of laundry on the floor, where you are not sure what is in there and you need to rummage.

Current delays in transaction processing can be extremely high on any single chain. On Ethereum, for example, it takes two epochs, or 12.8 minutes, to finalize a transaction. Without decentralized RAM, Web3 relies on mempools, where transactions sit until they’re processed, resulting in delays, congestion and unpredictability.
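The 12.8-minute figure follows from Ethereum’s consensus timing of 12-second slots and 32 slots per epoch. The constant names below are illustrative labels for those two parameters.

```python
SECONDS_PER_SLOT = 12     # one slot every 12 seconds
SLOTS_PER_EPOCH = 32      # 32 slots make up an epoch
EPOCHS_TO_FINALITY = 2    # the two epochs cited in the text

epoch_minutes = SECONDS_PER_SLOT * SLOTS_PER_EPOCH / 60
finality_minutes = epoch_minutes * EPOCHS_TO_FINALITY
print(epoch_minutes, finality_minutes)  # 6.4 12.8
```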

Full nodes store everything, bloating the system and making retrieval complex and costly. In computers, the RAM keeps what is currently needed, while less-used data moves to cold storage, maybe in the cloud or on disk. Full nodes are like a closet holding every piece of clothing you have ever worn, from your baby clothes until now.
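The hot-versus-cold split that conventional computers rely on can be sketched in a few lines. This is a generic single-machine illustration using least-recently-used eviction, not a description of deRAM or of any blockchain client; `TieredStore` and its methods are hypothetical names.

```python
from collections import OrderedDict

class TieredStore:
    """Small hot tier (think RAM) backed by an unbounded cold tier (think disk)."""

    def __init__(self, hot_capacity):
        self.hot_capacity = hot_capacity
        self.hot = OrderedDict()  # most recently used keys sit at the end
        self.cold = {}

    def put(self, key, value):
        self.cold.pop(key, None)  # a key lives in exactly one tier
        self.hot[key] = value
        self.hot.move_to_end(key)
        self._evict()

    def get(self, key):
        if key in self.hot:
            self.hot.move_to_end(key)
            return self.hot[key]
        value = self.cold.pop(key)  # raises KeyError for unknown keys
        self.hot[key] = value       # promote on access
        self._evict()
        return value

    def _evict(self):
        # Demote the least recently used entries once the hot tier overflows.
        while len(self.hot) > self.hot_capacity:
            old_key, old_value = self.hot.popitem(last=False)
            self.cold[old_key] = old_value

store = TieredStore(hot_capacity=2)
for name in ("a", "b", "c"):
    store.put(name, name.upper())
print(list(store.hot), list(store.cold))  # ['b', 'c'] ['a']
```

Only the two most recently touched items stay in the fast tier; everything else remains retrievable but no longer occupies it.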

We don’t do this on our computers, but mempools and store-everything full nodes exist in Web3 because storage and read/write access aren’t optimized. With RLNC, we create decentralized RAM (deRAM) for timely, updateable state in a way that is economical, resilient and scalable.

DeRAM and data propagation powered by RLNC can solve Web3’s biggest bottlenecks by making memory faster, more efficient, and more scalable. Together they optimize data propagation, reduce storage bloat, and enable real-time access without compromising decentralization. Such a memory layer has long been a key missing piece in the world computer, but not for much longer.


Wall Street Bank Citigroup Sees Ether Falling to $4,300 by Year-End

Wall Street giant Citigroup (C) has launched new ether (ETH) forecasts, calling for $4,300 by year-end, which would be a decline from the current $4,515.

That’s the base case though. The bank’s full assessment is wide enough to drive an army regiment through, with the bull case being $6,400 and the bear case $2,200.

The bank analysts said network activity remains the key driver of ether’s value, but much of the recent growth has been on layer-2s, where value “pass-through” to Ethereum’s base layer is unclear.

Citi assumes just 30% of layer-2 activity contributes to ether’s valuation, putting current prices above its activity-based model, likely due to strong inflows and excitement around tokenization and stablecoins.

A layer 1 network is the base layer, or the underlying infrastructure of a blockchain. Layer 2 refers to a set of off-chain systems or separate blockchains built on top of layer 1s.

Exchange-traded fund (ETF) flows, though smaller than bitcoin’s (BTC), have a bigger price impact per dollar, but Citi expects them to remain limited given ether’s smaller market cap and lower visibility with new investors.

Macro factors are seen adding only modest support. With equities already near the bank’s S&P 500 6,600 target, the analysts do not expect major upside from risk assets.

Read more: Ether Bigger Beneficiary of Digital Asset Treasuries Than Bitcoin or Solana: StanChart


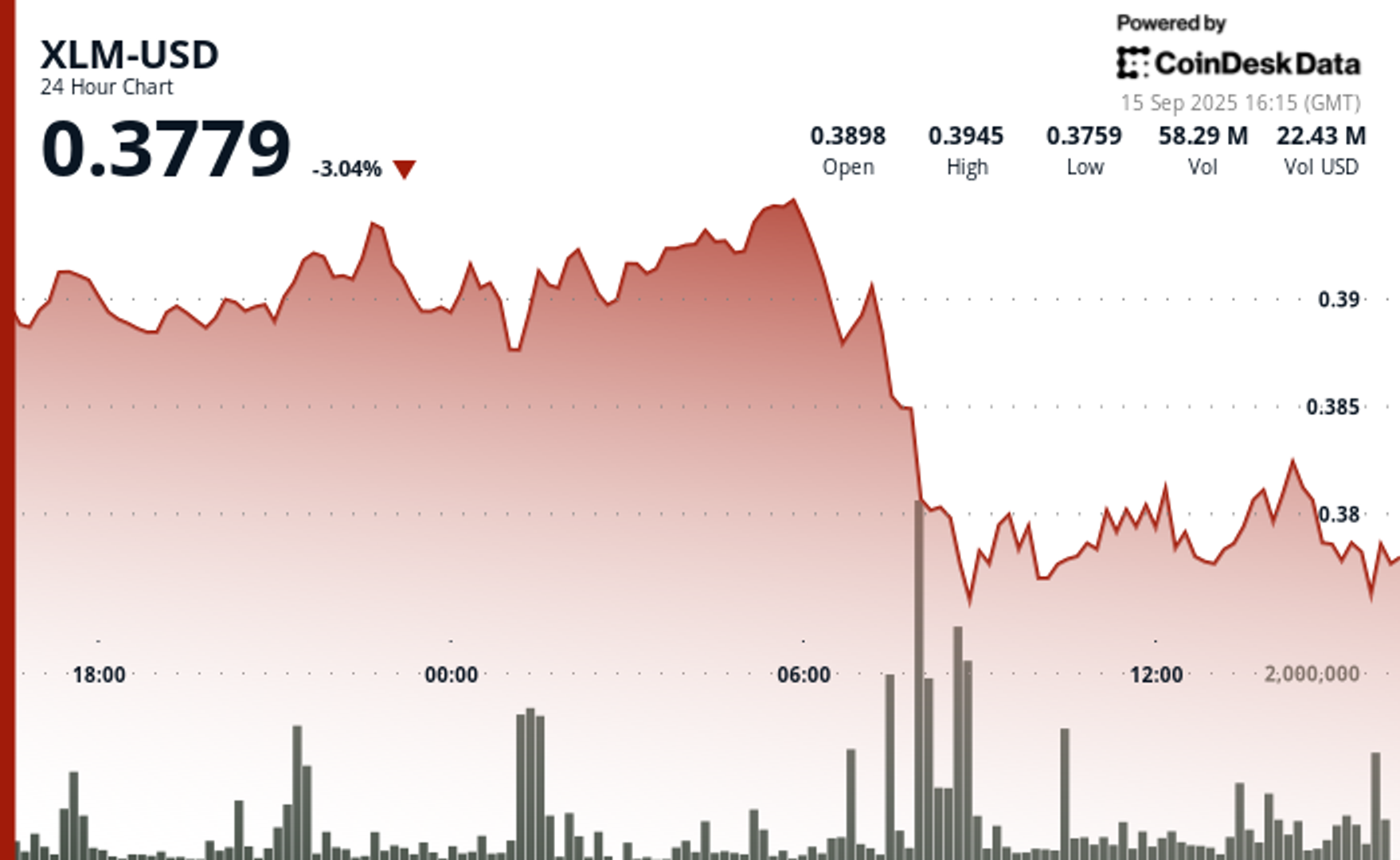

XLM Sees Heavy Volatility as Institutional Selling Weighs on Price

Stellar’s XLM token endured sharp swings over the past 24 hours, tumbling 3% as institutional selling pressure dominated order books. The asset declined from $0.39 to $0.38 between September 14 at 15:00 and September 15 at 14:00, with trading volumes peaking at 101.32 million—nearly triple its 24-hour average. The heaviest liquidation struck during the morning hours of September 15, when XLM collapsed from $0.395 to $0.376 within two hours, establishing $0.395 as firm resistance while tentative support formed near $0.375.

Despite the broader downtrend, intraday action highlighted moments of resilience. From 13:15 to 14:14 on September 15, XLM staged a brief recovery, jumping from $0.378 to a session high of $0.383 before closing the hour at $0.380. Trading volume surged above 10 million units during this window, with 3.45 million changing hands in a single minute as bulls attempted to push past resistance. While sellers capped momentum, the consolidation zone around $0.380–$0.381 now represents a potential support base.

Market dynamics suggest distribution patterns consistent with institutional profit-taking. The persistent supply overhead has reinforced resistance at $0.395, where repeated rally attempts have failed, while the emergence of support near $0.375 reflects opportunistic buying during liquidation waves. For traders, the $0.375–$0.395 band has become the key battleground that will define near-term direction.

Technical Indicators

- XLM retreated 3% from $0.39 to $0.38 during the previous 24 hours, from 14 September 15:00 to 15 September 14:00.

- Trading volume peaked at 101.32 million during the 08:00 hour, nearly triple the 24-hour average of 24.47 million.

- Strong resistance established around $0.395 level during morning selloff.

- Key support emerged near $0.375 where buying interest materialized.

- Price range of $0.019 representing 5% volatility between peak and trough.

- Recovery attempts reached $0.383 by 13:00 before encountering selling pressure.

- Consolidation pattern formed around $0.380-$0.381 zone suggesting new support level.

Disclaimer: Parts of this article were generated with assistance from AI tools and reviewed by our editorial team to ensure accuracy and adherence to our standards. For more information, see CoinDesk’s full AI Policy.


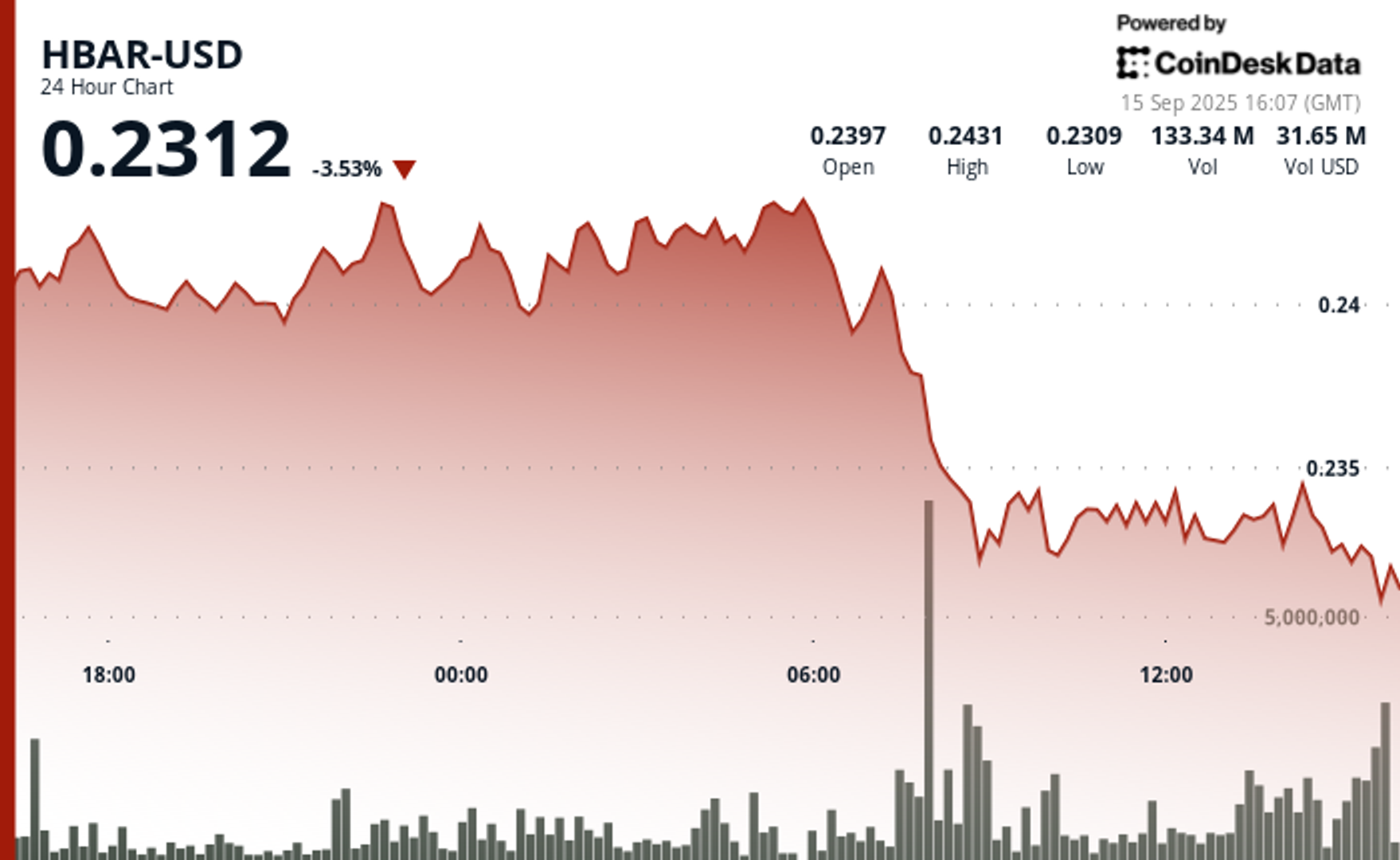

HBAR Tumbles 5% as Institutional Investors Trigger Mass Selloff

Hedera Hashgraph’s HBAR token endured steep losses over a volatile 24-hour window between September 14 and 15, falling 5% from $0.24 to $0.23. The token’s trading range expanded by $0.01 — a move often linked to outsized institutional activity — as heavy corporate selling overwhelmed support levels. The sharpest move came between 07:00 and 08:00 UTC on September 15, when concentrated liquidation drove prices lower after days of resistance around $0.24.

Institutional trading volumes surged during the session, with more than 126 million tokens changing hands on the morning of September 15 — nearly three times the norm for corporate flows. Market participants attributed the spike to portfolio rebalancing by large stakeholders, with enterprise adoption jitters and mounting regulatory scrutiny providing the backdrop for the selloff.

Recovery efforts briefly emerged during the final hour of trading, when corporate buyers tested the $0.24 level before retreating. Between 13:32 and 13:35 UTC, one accumulation push saw 2.47 million tokens deployed in an effort to establish a price floor. Still, buying momentum ultimately faltered, with HBAR settling back into support at $0.23.

The turbulence underscores the token’s vulnerability to institutional distribution events. Analysts point to the failed breakout above $0.24 as confirmation of fresh resistance, with $0.23 now serving as the critical support zone. The surge in volume suggests major corporate participants are repositioning ahead of regulatory shifts, leaving HBAR’s near-term outlook dependent on whether enterprise buyers can mount sustained defenses above key support.

Technical Indicators Summary

- Corporate resistance levels crystallized at $0.24 where institutional selling pressure consistently overwhelmed enterprise buying interest across multiple trading sessions.

- Institutional support structures emerged around $0.23 levels where corporate buying programs have systematically absorbed selling pressure from retail and smaller institutional participants.

- The unprecedented trading volume surge to 126.38 million tokens during the 08:00 morning session reflects enterprise-scale distribution strategies that overwhelmed corporate demand across major trading platforms.

- Subsequent institutional momentum proved unsustainable as systematic selling pressure resumed between 13:37-13:44, driving corporate participants back toward $0.23 support zones with sustained volumes exceeding 1 million tokens, indicating ongoing institutional distribution.

- Final trading periods exhibited diminishing corporate activity with zero recorded volume between 13:13-14:14, suggesting institutional participants adopted defensive positioning strategies as HBAR consolidated at $0.23 amid enterprise uncertainty.

Disclaimer: Parts of this article were generated with assistance from AI tools and reviewed by our editorial team to ensure accuracy and adherence to our standards. For more information, see CoinDesk’s full AI Policy.
